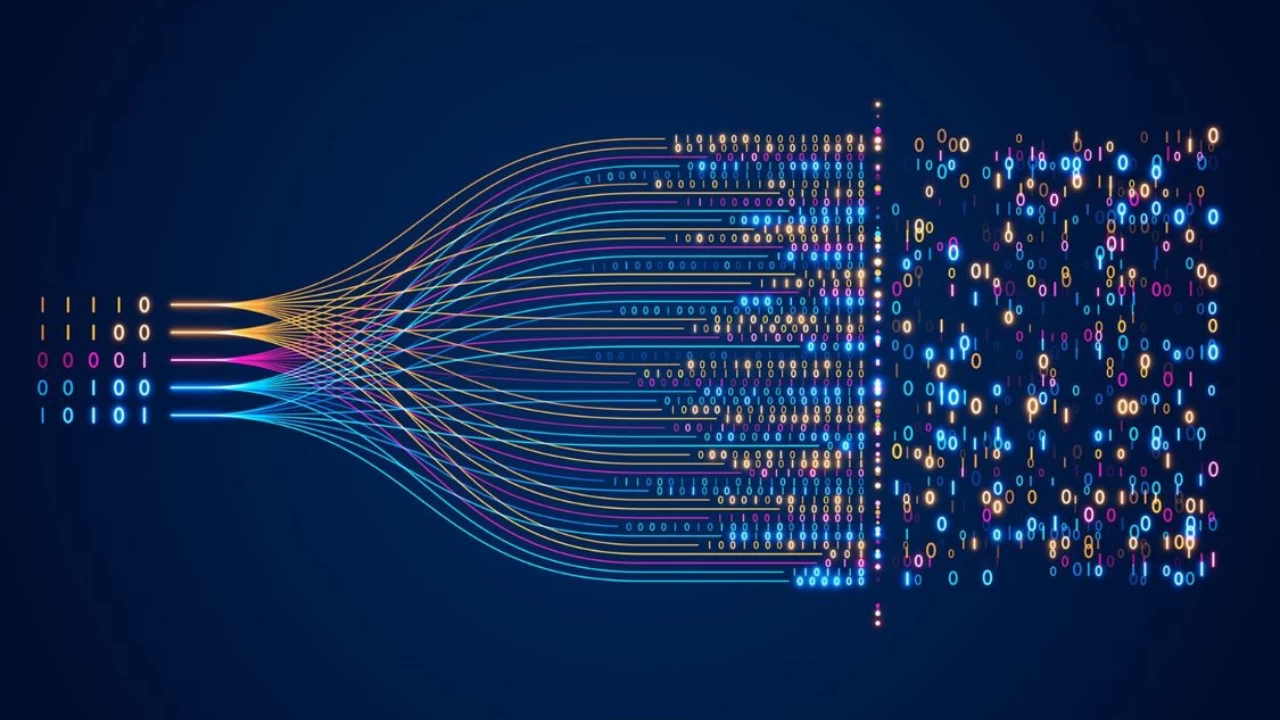

Researchers have found that large language models (LLMs) may mirror the way the human brain processes complex visual scenes. The study comes from an international collaboration that includes the Université de Montréal, the University of Minnesota, and, in Germany, the University of Osnabrück and Freie Universität Berlin. Published in Nature Machine Intelligence, the research shows that AI can not only recognise the objects in a scene but also capture the meaning of what we see.

The researchers found that when natural scene descriptions are fed into LLMs, the models generate what the team calls language-based fingerprints of a scene's meaning. These fingerprints closely matched the brain activity recorded in MRI scans while participants viewed the same scenes. The finding opens new possibilities for decoding thoughts, building more powerful brain-computer interfaces, and designing more intelligent AI vision systems.

The team used descriptions of everyday scenes, such as children playing or a city skyline, to create the LLM fingerprints. These patterns aligned closely with neural activity, indicating that the brain and LLMs represent the meaning of scenes in very similar ways. The authors also trained neural networks to predict these fingerprints directly from images, outperforming most current AI vision models.

Lead researcher Ian Charest explained that the work may lead to better visual prostheses for people with severe vision loss and to better decision-making systems in autonomous vehicles. A clearer picture of how the brain builds a visual representation of meaning could also improve computational models and help AI systems see more like humans. The study's first author, Adrien Doerig, said the result is a step towards closing the gap between human cognition and machine perception.